LLM Seeding: The Complete Guide to Getting Your Brand Cited in AI Search Results (2026)

AI-referred visitors convert at up to 18%, roughly 4–10x higher than organic search. Yet AI citations still account for less than 2% of total web traffic. The brands that master LLM seeding now are locking in compounding authority before the channel saturates. This guide tells you exactly how.

Your competitor just appeared in a ChatGPT answer. You didn't. That's not a coincidence, and it's not luck. It's the result of a deliberate strategy called LLM seeding: the practice of engineering your brand's presence across the web so that large language models cite you when users ask the questions that matter to your business. LLM seeding is a specific part of your overall Answer Engine Optimization strategy.

The stakes are shifting fast. LLMs cite only 2–7 domains per response, compared with the 10 blue links on Google's first page and the many more results behind them. The competition for those slots is intensifying, and once a model establishes a trusted source, it tends to reinforce that choice across related prompts. These are winner-takes-most dynamics baked into the model's parameters. If you're not in those answers today, you're training users to associate your category with someone else's brand.

This guide covers everything: how AI models actually decide what to cite, the strategies that consistently earn mentions, the platforms that carry the most weight, and how to measure whether any of it is working.

What Is LLM Seeding?

LLM seeding, a part of the overall Answer Engine Optimization (AEO) strategy, is the practice of strategically distributing accurate, authoritative, and machine-readable content across the web so that AI models like ChatGPT, Claude, Perplexity, and Google's AI Overviews incorporate your brand into their generated responses.

The term breaks down simply:

- LLM (Large Language Model): The AI technology behind conversational tools like ChatGPT, Claude, Perplexity, and Gemini

- Seeding: Planting your brand information in the soil where AI grows its answers: training data, trusted web sources, authoritative platforms

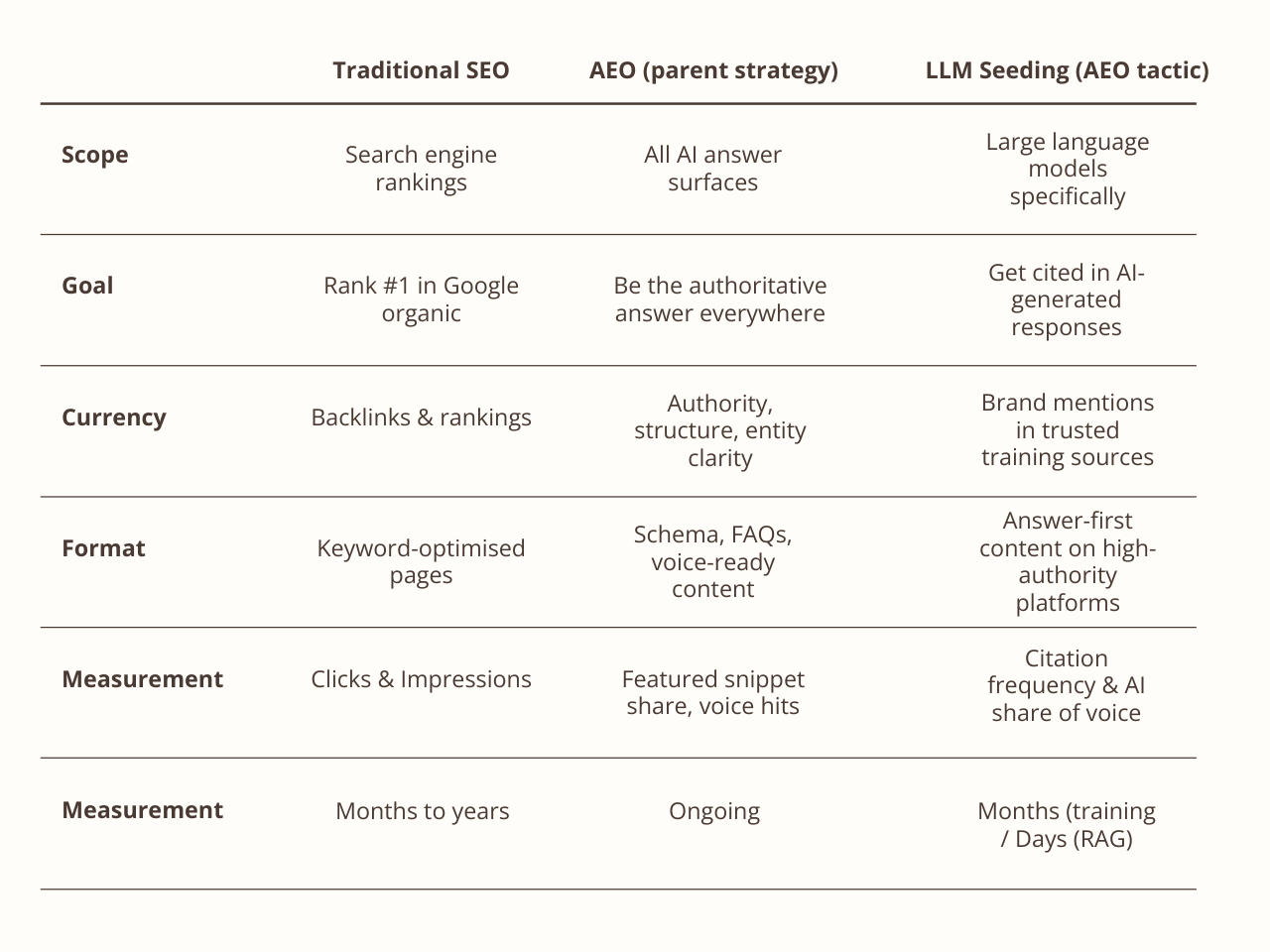

Traditional SEO asks: How do I rank on page one? LLM seeding asks: How do I become the source an AI trusts enough to quote? These are meaningfully different questions with meaningfully different answers. The table below captures the core distinction:

How AI Models Actually Discover and Cite Your Brand

Understanding why AI cites certain brands requires understanding the two fundamentally different ways LLMs access information.

Parametric Knowledge (Training Data)

This is everything the model "baked in" during training: a vast crawl of the web, books, Wikipedia, Reddit, and countless other sources. Approximately 22% of major AI training data comes from Wikipedia alone. This knowledge is static, fixed at training cutoff, and accessed in milliseconds without any external call. Entities mentioned frequently across authoritative sources during training develop stronger neural "representations," making them far more likely to surface in responses. Around 60% of ChatGPT queries are answered purely from this parametric memory, with no live web lookup triggered at all.

What this means for you: If your brand, product, or expertise isn't woven across multiple authoritative sources before a model's training cutoff, you are effectively invisible to the majority of queries that model will ever answer.

Retrieved Knowledge (RAG — Retrieval-Augmented Generation)

The other 40% of the time, modern AI systems actively retrieve real-time information. The user's query gets converted into vector embeddings, relevant documents are retrieved and re-ranked, and the model synthesizes a response from those sources. This is how tools like Perplexity, Google AI Overviews, and ChatGPT Search operate when they browse the web in real time.

What this means for you: For real-time retrieval, recency, crawlability, structured data, and domain authority matter enormously. A well-structured page on a trusted domain published last month can outperform a buried 3-year-old article, even from a bigger brand.

The Three Signals That Drive AI Citation

Across both pathways, three factors consistently separate cited brands from invisible ones:

Consistency. Your brand information appears with the same accurate details across multiple trusted sources. Contradictory or sparse information trains models to be uncertain, and uncertain models hedge by not citing.

Structure. Content uses clear headings, defined terms, FAQ formats, schema markup, and lists that AI can parse without ambiguity. Research shows that 44.2% of all LLM citations come from the first 30% of an article, the intro. Lead with answers.

Authority. Third-party mentions, reviews, Wikipedia presence, and citations from established publications signal credibility. Brand search volume, not backlinks, is the strongest predictor of AI citations, with a 0.334 correlation, higher than the 0.255 correlation between referring domains and organic rankings.

Why the Numbers Demand Your Attention Now

The urgency around LLM seeding isn't hype. The data tells a specific, compelling story.

The traffic is small but explosive. AI referral traffic currently represents less than 2% of total web traffic. But AI-sourced traffic grew 527% year-over-year between January and May 2025. According to Semrush, AI search traffic is projected to surpass traditional search by the end of 2027. If you include AI Overviews, the share of web traffic is much higher. The overall number of searches has also likely increased significantly. Many searches are now run in LLMs and never result in a click-through.

The quality is extraordinary. Research analyzing 1,200+ publisher sites found that LLM-referred visitors converted to sign-ups at 1.66%, compared to just 0.15% from traditional search. A separate study found AI-driven visitors convert at 4.4x the rate of organic search visitors. Why? Because when an LLM cites you, the user has already been through a competitive filter. The AI has compared you to alternatives, found you credible, and presented you as the answer. Visitors arrive pre-educated and pre-qualified.

The zero-click reality. 60% of searches now end without any click. Even when users never visit your site, being named in an AI response builds brand familiarity and drives direct searches later. The correlation between AI chatbot mentions and brand search volume is 0.334, higher than the link-to-ranking correlation that SEO has relied on for two decades.

The playing field is levelling. Almost 90% of ChatGPT citations come from pages ranking in position 21 or lower in Google, meaning AI doesn't reward the same sites that dominate traditional search. A well-structured article on page 4 of Google can outperform a competitor's top-ranked page if it provides better, more structured answers.

The competitive window is open, but closing. Only 16% of brands systematically measure AI search performance as of late 2025. The majority of your competitors are optimizing blindly, or not at all.

The LLM Seeding Strategy Framework

Step 1: Map Your Prompts Before You Write a Word

Before producing any content, you need to understand how your customers are querying AI tools, not how they're querying Google. AI queries are conversational, contextual, and specific: "What's the best GDPR-compliant payroll system for a 50-person European startup?" is an AI query. "payroll software Europe" is a Google query. It is important to mirror that conversational style once visitors land on your webpage, which is why it is vital to enable chat on your website and continue the conversational context.

Build a prompt map: list every question a potential customer might ask an AI assistant at each stage of their buying journey: awareness, consideration, decision, and post-purchase. Think in user intent, not just keywords. The goal is to understand which conversations your brand needs to be a natural part of. For a practical approach, manually query ChatGPT, Le Chat (Mistral), Perplexity, and Claude with industry questions and study the patterns in who gets cited and how.

Step 2: Publish Authoritative, Answer-First Content

The single most important content principle for LLM seeding: lead with the answer, then explain it. AI models heavily favor content where the main claim appears in the first paragraph. After that, support it with specifics: statistics, comparisons, named examples, and expert perspective.

A key tactic here is semantic chunking. Semantic chunking is organizing your content into short, clearly labelled sections that each focus on a single idea or answer. Chunked content with natural-language headers is far easier for AI to parse, extract, and cite. Use a consistent layout for each section; repeatable structure signals credibility and makes your content predictable enough for AI to rely on.

Structure every piece with:

- A direct answer to the primary question in the opening paragraph

- Clear H2/H3 headings that mirror how a user might phrase the question

- Short, semantically self-contained sections (semantic chunking)

- Specific, verifiable facts (dates, percentages, company names, study sources)

- A TL;DR or key-takeaway summary (models consistently lift these)

- FAQs that match the conversational phrasing of real AI prompts

Avoid vague, hedge-heavy language. AI models treat your content like a very literal reader. "Industry-leading solutions that transform business outcomes" provides nothing extractable. "The tool reduces customer response time by 40% in under 30 days, based on data from 200 deployments" does.

Step 3: Build a Web of Consistent Third-Party Mentions

Your own website is one voice. AI builds confidence from a chorus. The goal is consistent, accurate brand information spread across many trusted, independent sources.

PR and media outreach: A single mention on a respected industry publication carries more citation weight than a dozen posts on your own blog. Brands in the top 25% for web mentions get 10x more AI visibility than others. Pursue contributor articles, expert quotes, and product features in trade media. Tools like HARO or Featured.com can help you find journalist requests to respond to.

Wikipedia: Given that roughly 22% of major AI training data comes from Wikipedia, a neutral, well-sourced Wikipedia presence is one of the highest-leverage moves available to established brands. Follow Wikipedia's editorial standards rigorously. Any appearance of promotional content will result in deletion and may hurt your brand's perceived credibility with AI systems.

Structured reviews: Reviews on G2, Capterra, Trustpilot, and Google Business Profile create user-generated content that AI retrieves for commercial queries. Specificity matters enormously here. A review that says "Reduced our support ticket volume by 35% in the first quarter" is citation-worthy. "Great product, highly recommend" is noise. Prompt your best customers with specific questions: "What problem did this solve, and what measurable result did you see?"

Step 4: Maintain Semantic Consistency Across All Channels

This step is frequently overlooked but critically important. AI models build associations between brands and topics based on patterns in data. If your messaging is broad, shifting, or inconsistent across channels, like different positioning on your blog versus your LinkedIn versus your press releases, those patterns become weak or contradictory, and the semantic link between your brand and your core topic suffers.

Pick your three to five most important thematic associations (the topics you most want to own in AI responses) and reinforce them consistently everywhere: on your site, in your contributed articles, in community posts, in PR quotes, and in your social content. The more consistently you appear in the same contexts with the same framing, the stronger and more reliable your brand's entity representation becomes in training data.

Step 5: Claim and Optimize Your Knowledge Graph Presence

AI models have a concept of an "entity": a distinct, recognizable thing in the world. Strong brands have strong entity representations: consistent name, consistent attributes, consistent associations across dozens of sources. Weak entities are ambiguous, contradictory, or sparsely represented.

To strengthen your brand's entity:

- Ensure your Google Business Profile is complete, accurate, and regularly updated

- Maintain consistent NAP (Name, Address, Phone) information across all platforms

- Use structured data markup to formally define your brand's attributes

- Build connections between your content and established entities (industry terms, recognized tools, named frameworks) so AI can contextualize your brand within its existing knowledge graph
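
As a minimal sketch of the structured-data point in the list above, the snippet below builds a schema.org Organization description as JSON-LD. The function name, the selection of properties, and every brand value (name, URL, phone number, profile link) are hypothetical placeholders chosen for illustration; adapt them to your own verified details.

```python
import json


def build_organization_jsonld(name, url, phone, same_as):
    """Return a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,             # keep the exact same spelling on every platform
        "url": url,
        "telephone": phone,
        "sameAs": list(same_as),  # other profiles that describe the same entity
    }
    return json.dumps(data, indent=2)


# Hypothetical example values, not a real company.
markup = build_organization_jsonld(
    name="Example Payroll Co",
    url="https://www.example.com",
    phone="+45 00 00 00 00",
    same_as=["https://www.linkedin.com/company/example-payroll-co"],
)
print(markup)
```

The resulting string is typically embedded in a page inside a `<script type="application/ld+json">` tag. Generating it from one source of truth is one way to keep name, address, and phone details identical wherever the markup is published.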

Step 6: Publish Proprietary Data and Original Research

One of the most powerful LLM seeding tactics is owning a unique data point that no one else can replicate. When an LLM discovers a unique fact, it returns to that source repeatedly because no alternative source can satisfy the same query.

Conduct mini-surveys, analyze your own customer data, or commission third-party research. Present findings with proper attribution: date, methodology, sample size. This signals to AI that the data is verifiable and citable, the two things it needs before including a claim in a response.

The Best Platforms for LLM Seeding

Not all content channels reach AI models equally. Prioritize based on the two pathways (training data vs. real-time retrieval):

High-authority publishing platforms (LinkedIn, Medium, Substack): Frequently crawled, high domain authority, and indexed quickly. LinkedIn articles are tied to real professional profiles, which gives them a credibility signal LLMs respond to. Medium's minimalist, semantic structure is highly LLM-readable. Substack suits thought leadership and newsletter-style content. Its emphasis on editorial voice and topical depth adds authority signals.

Industry publications and earned media: Niche authority sites carry disproportionate weight when AI is looking for expert sources in a specific vertical. A mention on a respected industry blog outperforms broad general-interest coverage for targeted queries. Getting featured in "best of" roundups (newsletters, blog posts, curated lists) is particularly high-leverage because these formats are among the most frequently cited by LLMs.

Review and comparison platforms (G2, Capterra, Trustpilot): Heavily cited when users ask AI for tool recommendations. These platforms are trusted by default because they aggregate third-party user experiences rather than brand-produced content. Prioritize detailed, specific reviews from real customers.

Reddit and Quora: According to Semrush, Reddit is cited more than any other single source in LLM responses. Quora is the most commonly cited website in Google's AI Overviews. Both reward specific, expert-driven, helpfully formatted contributions, not promotional content.

YouTube: YouTube citations in LLM responses have been increasing noticeably throughout 2025, as AI systems improve at extracting value from transcripts, captions, and descriptions. Optimize video titles, descriptions, and captions with the same principles applied to written content.

GitHub Discussions: For technical brands, GitHub community discussions are a powerful and underused seeding channel. Participating in relevant threads, answering questions, and contributing fixes builds credibility that AI picks up on for developer-focused queries.

Editorial microsites: A standalone, editorially-structured microsite, built around your industry, not just your product, can carry more citation credibility than a branded company page. IKEA's Life at Home research site is a good example: it publishes original data on homelife and happiness that connects to its brand without being a product catalogue. Structure it like an independent publication, include author bios, cite your sources, and make your editorial policies visible.

Your own site (with technical AEO): The foundation. Even if AI doesn't always cite your domain directly, a well-structured, authoritative site strengthens your brand's entity representation and is frequently retrieved via RAG.

Content Formats That Earn Citations

The format of content matters as much as the substance. Some structures are simply easier for AI to extract, paraphrase, and cite.

"Best of" lists with transparent methodology are among the highest-performing formats for LLM citation. But they need to go beyond a simple ranked list. Explain how you selected the items. Your testing process, your criteria, your scoring methodology. LLMs prioritize content that shows transparent, well-reasoned decision-making. Give each item a targeted "best for" label that mirrors real query language ("best for freelancers on a budget," "best for enterprise teams"), because AI quotes these phrases directly when matching responses to specific user contexts. Use a consistent layout for each entry: name, summary, key features, pros and cons, pricing.

First-person product reviews with measurable outcomes are citation-worthy because they include the three things AI trusts most: real testing, repeatable methodology, and specific quotable conclusions. Include how many items you tested, who did the testing, when it was conducted, and what criteria you used. Write short, declarative sentences that balance positives and negatives; "It's the best choice for teams under 20 people, but lacks the advanced reporting features enterprise users need" is vastly more extractable than a paragraph of flowing prose.

FAQ-format content mirrors the exact structure of AI queries. The question-and-answer format is essentially pre-formatted for extraction. Every key page on your site should include an FAQ section targeting the specific questions users ask AI tools. WordPress plugins like Rank Math and Yoast can automatically add FAQPage structured data to these sections, making them easier for machines to parse.
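
For sites that do not use such a plugin, the FAQPage markup can be generated by hand. The sketch below is one possible approach, assuming the FAQ content is available as simple question-and-answer pairs; the function name is hypothetical, and the sample pair paraphrases a question from this guide's own FAQ.

```python
import json


def build_faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    entities = [
        {
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        }
        for question, answer in pairs
    ]
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": entities,
    }
    return json.dumps(data, indent=2)


faq_markup = build_faq_jsonld([
    (
        "How long does LLM seeding take to show results?",
        "Expect 3-6 months for training-data-based models; "
        "real-time retrieval tools can respond within days.",
    ),
])
print(faq_markup)
```

Keeping the visible FAQ text and the markup generated from the same pairs avoids the two drifting apart, which would work against the consistency signal described earlier.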

Comparison tables are consistently pulled into decision-oriented queries. Go beyond feature comparisons. Include use-case verdicts ("best for X"), clear tradeoffs, and citation-ready phrasing that tells AI exactly which option is right for which user type.

Numbered lists and step-by-step guides are easy for models to parse and cite as sequential reasoning. A "How to..." guide with numbered steps is more citation-friendly than flowing prose covering the same topic.

Opinion-led pieces with clear takeaways earn citations when they stake out a genuine, well-supported position. A contrarian industry opinion or a data-backed prediction, especially from a named, credentialed author, gives AI something distinctive to reference. Structure it with defined sections and explicit summary takeaways so the core argument is easy to extract.

Named expert opinions and E-E-A-T signals add credibility that AI recognizes. Include identified authors with bios, direct quotes from named professionals, and attributions to specific research. Content with transparent authorship (Experience, Expertise, Authoritativeness, Trustworthiness) consistently outranks shallow material in AI responses.

Case studies with specific outcomes follow the same rule: the more specific, the more citation-worthy. Quantified results ("reduced response time from 4 hours to 22 minutes") give AI extractable, verifiable facts it can include with confidence.

Tools, templates, and frameworks attract citations because they solve specific problems that users reference repeatedly. When Perplexity is asked "how do I check keyword rankings for free?" it recommends specific tools by name. Give your resource a descriptive title that matches how users search, include an intro that explains who it's for and how to use it, and add FAQs or use-case examples so AI understands its context and value.

How to Track AI Brand Visibility

Measuring LLM seeding success requires a different toolkit than traditional SEO analytics. The channel is newer, and the signals are less standardized, but the measurement landscape is developing quickly.

The GSC Signal: Rising Impressions, Falling Clicks

One of the clearest early indicators of LLM influence is a specific pattern in Google Search Console: impressions increasing while clicks decrease. This happens because users see your brand named in an AI response, make a mental note, and then search for you directly days or weeks later, bypassing the organic click entirely. The result is declining organic click-through rates paired with stable or growing branded searches and direct traffic.

To spot it: open Google Search Console and compare impressions versus clicks over the past 3–6 months. Then cross-reference with Google Analytics. If direct traffic is growing while organic clicks are declining, LLMs are likely influencing awareness. This is a positive signal, not a problem.
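
The comparison can also be scripted once the totals are exported. The following is a rough sketch that assumes you have already summed impressions and clicks for two consecutive periods from a Search Console export; the function names and the sample figures are hypothetical, and the direct-traffic cross-check in Google Analytics remains a separate step.

```python
def percent_change(old, new):
    """Relative change from old to new, as a percentage."""
    if old == 0:
        raise ValueError("baseline period must be non-zero")
    return (new - old) / old * 100.0


def shows_llm_pattern(prev, curr):
    """True when impressions rose while clicks fell between two periods.

    prev and curr are dicts holding "impressions" and "clicks" totals.
    """
    impressions_up = percent_change(prev["impressions"], curr["impressions"]) > 0
    clicks_down = percent_change(prev["clicks"], curr["clicks"]) < 0
    return impressions_up and clicks_down


# Hypothetical totals for two consecutive 3-month windows.
previous = {"impressions": 120_000, "clicks": 4_800}
current = {"impressions": 150_000, "clicks": 4_200}

print(percent_change(previous["impressions"], current["impressions"]))  # 25.0
print(percent_change(previous["clicks"], current["clicks"]))            # -12.5
print(shows_llm_pattern(previous, current))                             # True
```

A positive result here is only a prompt to look closer: seasonality, ranking changes, or new SERP features can produce the same pattern, so treat it as one signal alongside branded search and direct traffic.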

Manual Prompt Testing (Free)

The most direct method: regularly ask AI tools the questions your customers ask and observe whether your brand appears in responses. Use a private or incognito browser to avoid personalization skewing results. Test across ChatGPT, Perplexity, Claude, and Google AI Overviews. The same brand can see citation rates range from under 1% on one platform to over 27% on another, making multi-platform testing essential.

Build a prompt bank of 20–30 representative queries at different funnel stages. Run each monthly and document: which tool was used, the exact prompt, whether your brand appeared, where in the response it featured, the sentiment and framing, and which competitors were cited alongside you. This data becomes your baseline for tracking improvement over time.
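
Part of that log can be filled in automatically. The sketch below assumes you paste each AI response in as plain text and maintain your own list of competitor names; `PromptResult`, `analyze_response`, and all brand names are hypothetical. It records whether the brand appeared, its order among the tracked brands, and which competitors were named. Sentiment and framing still need a human read.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PromptResult:
    tool: str
    prompt: str
    brand_mentioned: bool
    position: Optional[int]  # 1 = first tracked brand named in the response
    competitors: List[str] = field(default_factory=list)


def analyze_response(tool, prompt, response, brand, competitors):
    """Record whether and where `brand` appears relative to tracked competitors."""
    text = response.lower()
    # Character offset of the first whole-word occurrence of each tracked name.
    offsets = {}
    for name in [brand, *competitors]:
        match = re.search(r"\b" + re.escape(name.lower()) + r"\b", text)
        if match:
            offsets[name] = match.start()
    ordered = sorted(offsets, key=offsets.get)
    mentioned = brand in offsets
    position = ordered.index(brand) + 1 if mentioned else None
    return PromptResult(
        tool, prompt, mentioned, position, [n for n in ordered if n != brand]
    )


# Hypothetical response text and brand names.
result = analyze_response(
    tool="Perplexity",
    prompt="What's the best payroll system for a 50-person startup?",
    response="For small teams, BetaPay and Acme Payroll are both solid; "
             "GammaHR suits enterprises.",
    brand="Acme Payroll",
    competitors=["BetaPay", "GammaHR", "DeltaPay"],
)
print(result)
```

Whole-word matching is a deliberate simplification: it will miss abbreviations or misspellings of a brand, and names that start or end with punctuation would need a different pattern.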

AI Monitoring Tools

Otterly.ai (https://otterly.ai). Tracks AI-generated citations and brand mentions across ChatGPT, Perplexity, and other tools

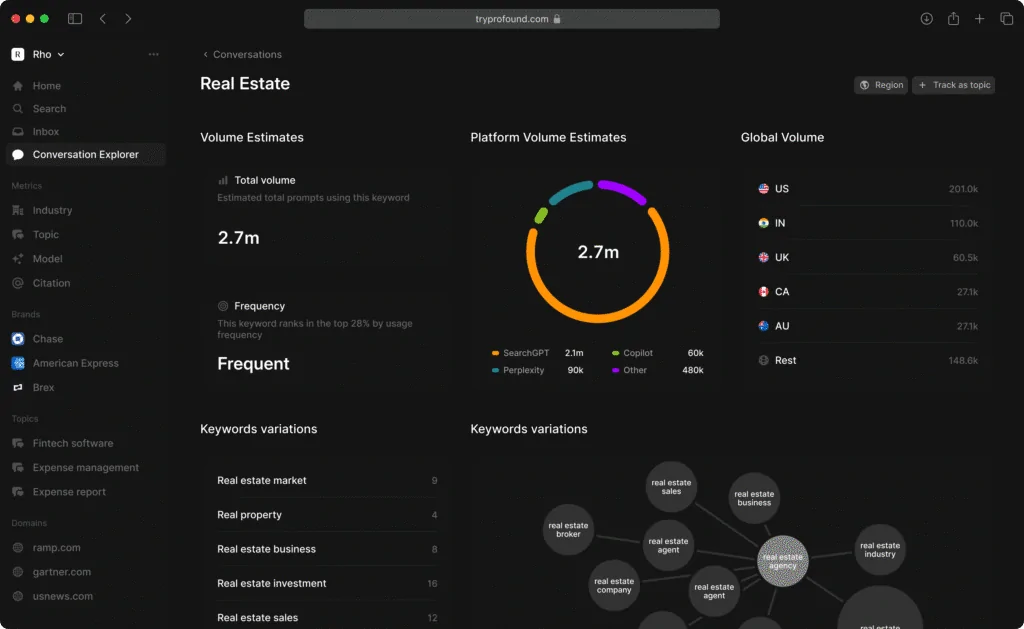

Profound AI (https://www.tryprofound.com). Enterprise-grade platform; tracks LLM search volume, citation frequency, and bot traffic analytics

Semrush AI Visibility Toolkit (https://www.semrush.com/ai-seo/). Add-on to existing plans; tracks brand performance, share of voice, and sentiment across ChatGPT, Perplexity, and Google AI Mode; includes competitor benchmarking

PromptMonitor (https://www.promptmonitor.ai). Monitors brand mentions across major LLM platforms

Analytics Configuration

Configure your web analytics to properly capture AI referral traffic. Many platforms currently misattribute LLM traffic as "direct," meaning your data likely underestimates AI-driven visits significantly. Add custom channel groupings that capture traffic from ChatGPT, Perplexity, Copilot, and Gemini referral domains. Track this traffic separately and compare its conversion rate against organic and direct channels.
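
The same grouping logic can be applied to raw referrer data, for example in a log export or a data warehouse. The sketch below is an illustration only: the hostname list is an assumption based on the tools named above and should be checked against the referrers that actually appear in your own reports, and `classify_referrer` is a hypothetical helper.

```python
from urllib.parse import urlparse

# Assumed referral hostnames; verify against your own referral reports.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url):
    """Return the AI tool name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for domain, tool in AI_REFERRERS.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return tool
    return None


print(classify_referrer("https://chatgpt.com/"))
print(classify_referrer("https://www.perplexity.ai/search?q=payroll"))
print(classify_referrer("https://www.google.com/"))
```

Sessions that arrive with no referrer at all cannot be recovered this way, which is why the misattribution to "direct" described above persists even with careful channel setup.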

The Five Metrics That Matter

Beyond rankings, focus on these AI-visibility KPIs (often grouped under GEO, Generative Engine Optimization):

- Citation Frequency: How often your brand appears in AI responses for target prompts

- Brand Visibility Score: Percentage of target prompts where you receive a mention

- AI Share of Voice: Your citations as a proportion of total citations in your category

- Sentiment Score: How the AI frames your brand (positive, neutral, negative)

- LLM Conversion Rate: Revenue or lead outcomes attributed to AI-referred traffic
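
To make the first three definitions concrete, here is a small sketch that computes them from a prompt-testing log. The log format (one dict per run, listing each cited brand once), the function name, and all brand names and prompts are assumptions made for illustration. Sentiment Score and LLM Conversion Rate are left out because they depend on manual review and analytics data respectively.

```python
def visibility_metrics(runs, brand):
    """Compute three visibility KPIs from logged prompt runs.

    Each run is a dict: {"prompt": str, "brands": list of brand names cited},
    with each brand listed at most once per run.
    """
    if not runs:
        raise ValueError("at least one logged run is required")
    # Citation Frequency: number of responses that name the brand.
    citation_frequency = sum(1 for run in runs if brand in run["brands"])
    # Brand Visibility Score: share of distinct prompts with at least one mention.
    prompts = {run["prompt"] for run in runs}
    prompts_with_brand = {run["prompt"] for run in runs if brand in run["brands"]}
    # AI Share of Voice: the brand's citations over all brand citations logged.
    total_citations = sum(len(run["brands"]) for run in runs)
    return {
        "citation_frequency": citation_frequency,
        "brand_visibility_score": 100.0 * len(prompts_with_brand) / len(prompts),
        "ai_share_of_voice": (
            100.0 * citation_frequency / total_citations if total_citations else 0.0
        ),
    }


# Hypothetical log: four runs across three distinct prompts.
runs = [
    {"prompt": "best payroll tool for startups", "brands": ["Acme Payroll", "BetaPay"]},
    {"prompt": "best payroll tool for startups", "brands": ["BetaPay"]},
    {"prompt": "GDPR-compliant payroll software", "brands": ["Acme Payroll", "BetaPay", "GammaHR"]},
    {"prompt": "payroll software with time tracking", "brands": ["GammaHR", "BetaPay"]},
]
metrics = visibility_metrics(runs, "Acme Payroll")
print(metrics)
```

In this sample the brand is cited in 2 of 4 runs, appears for 2 of 3 distinct prompts (about 66.7%), and holds 2 of 8 total citations (25%). Because responses vary between runs, repeating each prompt several times gives a steadier estimate than a single pass.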

Common Pitfalls and Realistic Expectations

Timelines are longer than SEO. Content optimized for training data may take several months to influence a model's parametric knowledge. Real-time retrieval tools like Perplexity show results faster. Plan for a 3–6 month horizon before expecting consistent citation changes for training-based models. LLM seeding resembles brand-building and thought leadership more than performance marketing — it compounds slowly, then accelerates.

You influence, not control. AI responses are probabilistic. There's less than a 1-in-100 chance that ChatGPT will produce the same list of brands in any two responses to identical prompts. You can dramatically increase the likelihood of being cited; you cannot guarantee it. There is no "optimization knob" to turn.

Transparency in training data is limited. It's not always clear which sources a given model weights most highly. LLM seeding must therefore rest on strategic assumptions (that credibility, consistency, topical depth, and authoritative third-party mentions increase citation probability) rather than on precise reverse engineering of an opaque process.

Attribution is genuinely hard. Zero-click AI mentions build brand awareness without generating measurable website traffic. This is real value. But it won't show up cleanly in your analytics. Proxy metrics like branded search volume increases, direct traffic growth, and the quality of sales-qualified leads from AI-referred sessions help close the attribution gap.

Manipulation backfires. Publishing fabricated reviews, seeding false statistics, or attempting to game AI outputs with deceptive content tends to backfire as models improve at detecting manipulation. The sustainable strategy of publishing accurate, helpful, well-structured information across authoritative sources also happens to be the ethical one.

Turning AI Citations into Revenue

Being cited by AI is worth nothing if the experience ends there. The high-converting nature of AI-referred traffic comes precisely because those visitors arrive pre-qualified. But you still need to capture them when they land. This is where a conversational revenue layer solution like Weply, experts in lead conversions, is essential.

Across 13 months of data, LLM referrals delivered an approximate 18% conversion rate, higher than any other traffic channel including paid search, SEO, and direct. But this traffic is also growing from a small base, meaning you can dramatically improve revenue outcomes through relatively small increases in citation frequency.

The practical implication: when AI-referred visitors land on your site, they are more likely than any other cohort to be evaluating a purchase or seriously considering your solution. Ensure landing pages reflect the context they arrived from. If AI mentions you in the context of a specific use case, your landing page should speak to that use case immediately. Use contextual CTAs and chat, and avoid treating these visitors like cold traffic that needs extensive education about your category.

AI-generated awareness delivers high-intent visitors; real-time chat captures their intent before it dissipates.

FAQs About LLM Seeding

How long does LLM seeding take to show results? For training-data-based models like ChatGPT and Claude, expect 3–6 months before content influences citations consistently. Real-time retrieval tools like Perplexity can respond to new content within days. Start with Perplexity to see faster feedback loops while building long-term foundations for training-based visibility.

Is LLM seeding the same as SEO or the same as AEO? Neither, though they're related. LLM seeding is a specific tactic that sits within AEO (Answer Engine Optimization), which is itself a broader discipline than traditional SEO. SEO optimizes for clicks and rankings in search engines. AEO optimizes for citations and authority across all answer surfaces: voice search, featured snippets, knowledge panels, and AI tools. LLM seeding is AEO's most forward-looking workstream: it focuses specifically on influencing what large language models know and recall about your brand, through deliberate content placement in the sources AI learns from. Almost 90% of ChatGPT citations come from pages ranking in position 21 or lower in Google, which illustrates why LLM seeding requires its own logic, separate from, though complementary to, traditional SEO.

Does LLM seeding work the same way across ChatGPT, Claude, and Perplexity? No, and this distinction matters. ChatGPT and Claude rely more heavily on parametric (training) knowledge and are harder to influence in real time. Perplexity is primarily a RAG-based engine that retrieves fresh content during every query, making it more responsive to recent, well-structured content. Google AI Overviews blend both pathways. A diversified strategy including consistent web presence plus structured, crawlable content increases citation probability across all platforms.

Can small businesses compete with large brands for AI citations? Yes, particularly in niche verticals. Consistency matters more than budget. A small business that publishes specific, well-structured answers to underserved questions, earns genuine customer reviews, and participates authentically in community forums can outperform a larger competitor that publishes generic content. Identify the specific, long-tail prompts your customers ask AI, and own those thoroughly rather than competing on broad, high-volume queries.

How do I correct inaccurate information an AI tool is spreading about my brand? Publish accurate, well-sourced content across authoritative platforms to establish the correct narrative. The more consistently credible sources reflect accurate information, the faster AI systems update. For critical corrections, prioritize Wikipedia (if applicable), major review platforms, and earned media coverage. These carry the most weight in both training data and real-time retrieval.

Is LLM seeding ethical? Yes, when it focuses on making truthful, helpful brand information accessible. The goal is ensuring AI can find and verify accurate information about your brand, not deceiving users or gaming AI with false claims. Creating genuinely useful content, earning authentic reviews, and building real third-party credibility are the tactics that work, and they happen to be entirely ethical.