The AEO Funnel: Why AI Search Sends Fewer Visitors but Higher Intent

TL;DR:

AI search isn't killing your traffic. It's compressing your funnel. The research, comparison, and consideration stages of the buying journey now happen inside AI conversations before a visitor ever reaches your site. For many brands, what arrives is slightly fewer visitors with dramatically higher intent. AI-referred traffic converts at 14.2% versus 2.8% for traditional organic search. This article introduces a new measurement framework called AEO Traffic Quality (ATQ), with five metrics that capture what that traffic is actually worth: time to conversion, pages per visit, source-segmented conversion rate, demo request rate, and chat interaction rate. The strategic implication is simple: static websites are designed for browsers. AI search is sending you buyers. Most companies aren't set up to tell the difference.

Picture a marketer on a Monday morning. GA4 is open. Organic traffic is slightly down. Nothing catastrophic, but enough to notice. Enough to send a Slack message. "Is AI search killing our traffic?"

Here's the thing. That question is based on a false premise, and the false premise is doing real strategic damage. A recent large-scale study by Graphite and Similarweb, analysing over 40,000 of the largest sites in the US, found that organic search traffic is down around 2.5% year-over-year. Not 25%. Not 50%. The apocalyptic numbers circulating in marketing circles turn out to be driven by surveys, small biased samples, and a healthy dose of frequency illusion: the cognitive bias that makes things you've recently noticed seem to be everywhere.

So SEO isn't dead. Traffic isn't collapsing. But something is changing. And it's more interesting than a traffic decline. The volume is roughly stable. The visitor profile isn't.

That same marketer staring at their GA4 dashboard hasn't noticed what's buried in the data: AI-referred visitors convert at an average of 14.2%, compared to 2.8% for traditional organic search. That's not a rounding difference. That's a structural one. Not in how many people are arriving, but in who they are and why they clicked. The traffic panic is based on bad data. The real story is better. And almost nobody is measuring it properly.

The Funnel Didn't Disappear. It Moved.

To get what AI search is actually doing, you need to hold two funnels in your head at the same time.

The traditional search funnel:

Search → Click → Browse → Compare → Consider → Convert

A visitor arrives knowing they have a problem, but not much else. They don't know the solution, don't know the provider, maybe don't know the budget. Your website has to do all of it: educate them, build credibility, introduce the product, handle objections, and nudge them toward a decision across multiple sessions over days or weeks.

The funnel is long because the website owns the journey.

The AI search funnel:

Ask → AI Conversation → Recommendation → Click (for validation) → Convert

Now something's different. That visitor had a conversation with ChatGPT, Perplexity, Gemini, or Google's AI Overviews: one of the dozens of AI tools now woven into how people research and decide. The AI asked them questions. It compared options. It absorbed their constraints, such as budget, timeline, and specific requirements, and gave them a recommendation. Your brand came out of that conversation as a credible answer to their specific problem.

The research stage happened inside the AI. So did comparison. So did consideration. What arrives at your website isn't the start of a buying journey. It's the end of one.

This is the reframe everything else hangs on: AI search doesn't compress the conversion rate. It compresses the funnel. The visitor who clicks through has already done what your website was designed to make them do. They don't need educating. They don't need nurturing. They need one thing: confirmation that the AI got it right.

The Data That Proves It

Three independent bodies of evidence. All pointing in the same direction.

The volume story is calmer than the headlines suggest.

The Graphite/Similarweb study found that AI Overviews only appears on roughly 30% of queries, not the 50% figure that's been widely reported. And when it does appear, it's mostly on informational keywords. Commercial and transactional queries, the ones that actually drive revenue, are largely unaffected. Google itself stated in August 2025 that total organic click volume to websites has been "relatively stable year-over-year." Traffic to Google actually grew 1.4% in Q4 2025 versus Q4 2024.

So yes, when an AI Overview is present, click-through rates do fall from 15% to 8%. That's a real reduction. But it happens on fewer queries than assumed, and on the queries where commercial intent matters least. The panic overstates the volume problem by a significant margin.

The conversion gap is not subtle.

Where things get genuinely interesting is what happens to the visitors who do come through.

That 14.2% average for AI-referred visitors isn't driven by a single platform. Claude-referred visitors convert at around 16.8%, ChatGPT at 14.2%, Perplexity at 12.4%. Different tools, different audiences, different use cases. Same structural pattern. Every AI referral source converts at dramatically higher rates than organic search's 2.8%.

So here's the arithmetic that most marketing teams haven't done yet: if overall volume is roughly flat but a growing slice of that traffic converts at five times the rate, the value per session is rising even as the panic about declining sessions continues. The headline number is misleading. The unit economics are improving.
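To make that arithmetic concrete, here is a minimal sketch. The two conversion rates are the figures quoted above; the session counts and AI traffic shares are made-up inputs chosen purely for illustration, and the function names are hypothetical.

```python
# Conversion rates quoted in this article.
ORGANIC_CR = 0.028  # traditional organic search
AI_CR = 0.142       # AI-referred traffic


def blended_conversion_rate(ai_share: float) -> float:
    """Conversion rate of a traffic mix in which `ai_share` of sessions are AI-referred."""
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    return ai_share * AI_CR + (1.0 - ai_share) * ORGANIC_CR


def expected_conversions(sessions: int, ai_share: float) -> float:
    """Expected conversions for a number of sessions at a given AI share."""
    return sessions * blended_conversion_rate(ai_share)


if __name__ == "__main__":
    # Assumed scenario: sessions fall 2.5% while the AI share grows from 2% to 8%.
    before = expected_conversions(10_000, ai_share=0.02)
    after = expected_conversions(9_750, ai_share=0.08)
    print(f"before: {before:.1f} conversions")
    print(f"after:  {after:.1f} conversions")
```

Under those assumed inputs, expected conversions rise from about 303 to about 362 even though sessions fell. Your own mix will differ, so treat the shares as placeholders to replace with real segment data.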

And then there's the Microsoft Clarity data, which is hard to argue with. Analysing over 1,200 publisher and news websites, Microsoft found that Copilot-referred visitors converted at 17 times the rate of direct traffic and 15 times the rate of standard search traffic. Not 17% better. Seventeen times the rate. And Adobe's analysis of AI referral sessions found a 23% lower bounce rate, 12% more page views, and 41% longer time on site compared to other sources.

These visitors aren't bouncing and leaving. They're reading. They're exploring. They already care when they arrive.

The downstream story compounds it further.

AI-sourced customers generate more referrals and cancel less often than customers acquired through other channels. Funnel compression doesn't just produce faster conversions. In many cases, it produces better ones.

Introducing AEO Traffic Quality

Here's the problem. Every standard analytics metric your team reviews was designed for the traditional funnel: a visitor who arrives early, needs time, and should be nudged gradually toward a decision. Session duration. Pages per visit. Assisted conversions. Multi-touch attribution models. These benchmarks were built for a different visitor. Apply them to AI-referred traffic and you'll misread your best sessions as your weakest ones.

What's needed is a different measurement lens. Call it AEO Traffic Quality (ATQ): five intent-centric metrics that replace volume-centric thinking when you're evaluating the visitors AI search is sending.

1. Time to Conversion

AI-referred visitors don't come back next week. 73% of them convert within their first session. Not first visit, first session. If your attribution model is built around 7-day or 30-day windows and multi-touch journeys, you're likely underreporting the value of AI-referred traffic simply because the conversion happens too fast for your model to catch it properly. Shorten the window. Look for same-session conversions specifically.
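One way to look for same-session conversions is to export visitor-level data and compute the share yourself. The sketch below assumes a hypothetical export in which each converting visitor carries the number of sessions it took them to convert. The `Visitor` record and its field names are illustrative, not a GA4 schema, and the share is measured among visitors who converted at all, which is one possible reading of the metric.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Visitor:
    source: str                         # referral source label, e.g. "chatgpt.com"
    sessions_to_convert: Optional[int]  # 1 = converted in first session, None = never converted


def same_session_share(visitors: List[Visitor], source: str) -> Optional[float]:
    """Share of converting visitors from `source` who converted in their first session.

    Returns None when no visitor from that source converted, so callers
    can tell "no data" apart from a genuine 0%.
    """
    converted = [
        v for v in visitors
        if v.source == source and v.sessions_to_convert is not None
    ]
    if not converted:
        return None
    first_session = sum(1 for v in converted if v.sessions_to_convert == 1)
    return first_session / len(converted)


# Made-up sample: three of four ChatGPT converters convert in session one.
sample = [
    Visitor("chatgpt.com", 1),
    Visitor("chatgpt.com", 1),
    Visitor("chatgpt.com", 1),
    Visitor("chatgpt.com", 3),
    Visitor("chatgpt.com", None),
    Visitor("organic", 4),
]
print(same_session_share(sample, "chatgpt.com"))  # 0.75
```

If this share is high for AI sources and your attribution reports still credit most conversions to multi-touch paths, that mismatch is worth investigating.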

2. Pages Per Visit (Lower Is Normal and Good)

An AI-referred visitor who views two pages and books a demo is not a low-quality session. They came to confirm one thing, confirmed it, and acted. That's the funnel doing exactly what it should, just in a compressed form. Measuring pages per visit without segmenting by traffic source is one of the most common ways teams accidentally misread their most valuable visitors as their least engaged.

3. Conversion Rate, Segmented by Source

Never aggregate AI-referred traffic into your overall conversion rate. A blog reader and a Perplexity-referred visitor arriving at your pricing page are not the same visitor. They shouldn't share a benchmark. Build separate segments in GA4 for each AI platform, and track conversion rates independently. The variance between sources will tell you which platforms are sending the most commercially qualified traffic for your specific product.
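A small sketch shows why the aggregate hides the signal. The session data is invented for illustration, and `conversion_rate_by_source` is a hypothetical helper, not part of any analytics library.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def conversion_rate_by_source(sessions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Map each source to its own conversion rate from (source, converted) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    conversions: Dict[str, int] = defaultdict(int)
    for source, converted in sessions:
        totals[source] += 1
        conversions[source] += int(converted)
    return {source: conversions[source] / totals[source] for source in totals}


# Made-up sample: 100 organic sessions (3 convert), 10 Perplexity sessions (2 convert).
sample = (
    [("organic", True)] * 3 + [("organic", False)] * 97
    + [("perplexity.ai", True)] * 2 + [("perplexity.ai", False)] * 8
)

rates = conversion_rate_by_source(sample)
aggregate = sum(converted for _, converted in sample) / len(sample)

for source, rate in rates.items():
    print(f"{source}: {rate:.1%}")
print(f"aggregate: {aggregate:.1%}")
```

In this invented sample the aggregate rate sits close to the organic rate, so a team looking only at the blended number would never see the small segment converting several times better.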

4. Demo Requests and Direct Sales Inquiries

AI-referred traffic concentrates on bottom-funnel pages like pricing, case studies, and comparison content. These visitors are the most likely on your site to reach a high-intent CTA: demo requests, quote requests, consultation bookings. These should be your primary ATQ indicators. If these numbers are rising while session volume is flat, that's the funnel compression working correctly. Don't let aggregate traffic trends bury the signal.

5. Chat Interaction Rate

This one's specific to the AEO era. An AI-referred visitor who opens a live chat is not a support ticket. They're one conversation away from a decision. Tracking chat engagement rate separately for AI-referred traffic is the most direct leading indicator of conversion you have. It's a real-time read on whether your site is continuing the dialogue these visitors already started, or breaking it. With Weply, you get those insights along with a team of chat conversion experts who handle the conversations 24/7.

The instinct when traffic looks flat or slightly down is to optimise for volume. More content, more links, more reach. In the AEO era, that instinct is often backwards. The better question is: are you converting the visitors already arriving at the rate their intent deserves? For most teams, the answer is no. Not because the visitors aren't there, but because the measurement isn't catching them.

How AI Visitors Actually Behave on Your Website

The behavioural gap between an AI-referred visitor and a traditional organic visitor is large enough that it effectively requires different on-site infrastructure to convert. Understanding why means understanding what these visitors are actually doing when they land.

They arrive at the end of the journey, not the beginning.

They've researched. Compared. Had their specific constraints, like budget, use case, and scale, absorbed by an AI that then gave them a personalised recommendation. They arrive carrying context your website cannot see and your analytics cannot capture. They're not looking for an introduction to your product. They're looking for confirmation that the recommendation was right.

Gartner's research found that 83% of the B2B buying journey now happens before a buyer speaks to a salesperson. Forrester found that nearly 90% of B2B buyers now use generative AI tools during the purchase journey. What's arriving at your website isn't the top of the funnel. It's whatever remains after the funnel has already done most of its work elsewhere.

They skim for proof, not explanation.

Not hero banners. Not origin stories. They scan for the signals that close decisions. Case studies from their industry, pricing clarity, specific feature confirmation, social proof from peers in situations similar to theirs. This is why AI-referred traffic lands disproportionately on pricing pages and case study pages. These are the pages that answer the one or two remaining questions standing between a provisional decision and a final one.

They're fast. And they don't give second chances.

Companies that respond to a lead within five minutes are 21 times more likely to qualify them than companies that wait 30 minutes. This research is a decade old, but it applies to AI-referred visitors with even more force. A high-intent visitor who arrived after a thorough AI conversation, found no live chat support, submitted a contact form on a Thursday evening, and received a reply on Monday morning hasn't waited. The same AI that recommended you also recommended two alternatives. They're already in a conversation with one of them.

This is the evidence behind what we argued in The Website You Built Wasn't Designed for the Visitor AI Search Is Sending You. That article named the problem. These are the mechanics that explain it.

Static Sites Are Built for Browsers. AI Visitors Come to Finish a Decision.

Traditional website architecture is built on a browsing assumption: visitors arrive early, need information, and should be moved gradually toward a commitment across multiple sessions. Every page is a step in a sequence. Every CTA is an invitation to go a little deeper. The whole structure assumes a visitor who needs to be won over.

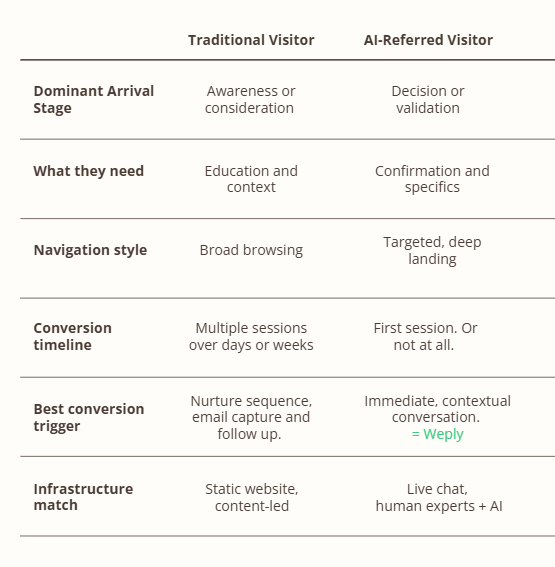

The AI-referred visitor doesn't fit that model. At all. They've already been through something like your funnel — inside the AI conversation that recommended you. They don't need the sequence. They need the answer. And they need it in a format that matches the conversational experience that just earned their trust. Here's what the mismatch looks like in practice:

This is why conversation beats navigation for AI-referred traffic. Not as a philosophy. As a practical response to how these visitors actually behave. They arrived in dialogue mode. The AI that referred them created an expectation of responsiveness, of personalisation, of back-and-forth. A static landing page breaks that expectation the moment they land. A contact form asking them to wait 24–48 hours for a reply doesn't just fail to meet their intent. It directly contradicts the experience that brought them to your site in the first place. What these visitors need is a continuation of the conversation. Not a brochure.

The Measurement Mandate: You Can't Optimise What You Don't Measure

Only 16% of brands currently track AI search performance in any systematic way. Which means 84% of companies are making decisions about their website, their conversion infrastructure, and their content investment without the data that would tell them where their highest-value visitors are coming from, or what's happening when those visitors arrive.

Three changes close most of that gap.

A. Segment your analytics by AI referral source. In GA4, create dedicated segments for chatgpt.com, perplexity.ai, claude.ai, and gemini.google.com. Not as a single "AI traffic" bucket. Separate segments. These platforms send different audiences with different intent profiles. Mixing them into aggregate organic or referral traffic makes them invisible, which means their exceptional conversion rates get averaged away by lower-intent sources.
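If you also process raw referrer data outside GA4 (server logs, a warehouse export), the same segmentation can be sketched in a few lines. The domain list comes from the paragraph above; the function name and display labels are hypothetical, and matching on hostname suffix is a design assumption so that subdomains such as `www.perplexity.ai` land in the right bucket.

```python
from typing import Optional
from urllib.parse import urlsplit

# Referrer domains named in this article, mapped to display labels.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_ai_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform label for a full referrer URL, or None.

    Expects a URL with a scheme (e.g. "https://..."); a bare domain or an
    empty string has no parseable hostname and is treated as non-AI.
    """
    host = (urlsplit(referrer_url).hostname or "").lower()
    for domain, label in AI_REFERRER_HOSTS.items():
        # Exact match, or a subdomain of the listed domain.
        if host == domain or host.endswith("." + domain):
            return label
    return None


print(classify_ai_referrer("https://www.perplexity.ai/search?q=crm"))  # Perplexity
print(classify_ai_referrer("https://www.google.com/"))                 # None
```

Keeping one label per platform, rather than a single "AI" flag, preserves the per-source comparison the segments are meant to enable.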

B. Build an ATQ dashboard and review it separately. The five AEO Traffic Quality metrics (time to conversion, pages per visit by AI source, conversion rate by platform, demo and inquiry rates, and chat engagement rate) should live in a standalone reporting view, reviewed weekly alongside, not inside, your overall traffic report. The goal is a clean, consistent read on what AI-referred traffic is actually worth, without having it diluted by site-wide benchmarks built for a different visitor.

C. Audit your highest-intent pages for the AI-visitor experience. Pricing pages. Comparison pages. Case studies. For each one, ask a single question: if someone arrived here having already provisionally decided to buy, what is the one remaining question standing between them and a yes, and is there a fast, human way to answer it? If the answer is a contact form and a two-day wait or an AI support chatbot, you already know what to fix.

The Funnel Didn't Shrink. It Sharpened.

Back to that marketer on Monday morning. Traffic is roughly flat, maybe down slightly. The GA4 line is uninspiring. The instinct is to panic, or at least to treat the situation as a volume problem requiring a volume solution. But the Graphite data resets the premise. Overall search traffic is down only 2.5%. AI Overviews appears on 30% of queries and concentrates on informational, not commercial, intent. The traffic apocalypse isn't happening.

What is happening is a quiet but significant shift in visitor composition. A growing proportion of the people arriving at your site came through an AI conversation. They arrive later in their decision process, with higher intent, and with conversion rates that dwarf anything traditional organic search ever produced. The funnel hasn't collapsed. It's compressed. And what comes out the other end is more valuable, not less.

The question was never whether AI search kills traffic. It's whether your measurement, your on-site experience, and your conversion infrastructure are built for the visitor who survived the compression. Fewer browsers. More buyers. Most websites were designed for the ones who no longer show up.

This article is part of Weply's ongoing series on Answer Engine Optimisation. Read the earlier pieces: How to Get Your Brand Into Google AI Overviews, LLM Seeding: The Complete Guide to Getting Your Brand Cited in AI Search Results, and The Website You Built Wasn't Designed for the Visitor AI Search Is Sending You.

Frequently Asked Questions

What is AEO Traffic Quality and how do I measure it? AEO Traffic Quality (ATQ) is a set of five intent-centric metrics designed to capture the value of AI-referred visitors rather than their volume. The five are: time to conversion, pages per visit (segmented by source), conversion rate by AI platform, demo and direct inquiry rates, and chat interaction rate. To measure it, create dedicated GA4 segments for each AI referral source, such as grok.com, chatgpt.com, perplexity.ai, claude.ai, and gemini.google.com. Then track conversion behaviour independently for each, with a shortened attribution window to capture same-session conversions that multi-touch models tend to miss.

Why is my conversion rate higher but my traffic lower since AI Overviews launched? This is funnel compression in action and it's the expected pattern, not a contradiction. AI Overviews and AI search tools are absorbing the early research and comparison stages of the buying journey. Visitors who click through have already been through a version of your funnel inside the AI conversation. They arrive later in their decision process, with more specific intent, and convert at significantly higher rates. It's worth noting, too, that recent large-scale research suggests the traffic decline may be smaller than commonly reported. Around 2.5% overall, not the 25%+ figures that have circulated.

Should I optimise for traffic volume or conversion rate in the AEO era? Conversion rate, specifically for AI-referred visitors as a distinct segment. Optimising for volume in a period when AI search is delivering higher-intent sessions at stable overall volume risks chasing the wrong metric. The more valuable question is whether you're converting the AI-referred visitors already arriving at the rate their intent warrants. For most sites, the infrastructure gap is larger than the traffic gap.

How do I track visitors from ChatGPT and Perplexity in Google Analytics? In GA4, go to Explore and create segments with a condition on the session source. Preferably, add chatgpt.com, perplexity.ai, claude.ai, and gemini.google.com as separate segments; alternatively, group them into a single "AI Referral" segment. Bear in mind that some AI-referred visitors arrive as direct traffic if the referral header is stripped. UTM parameters on any links you control can supplement referral tracking. Cross-reference with an AI visibility platform for a fuller picture.
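For the links you do control, tagging can be automated. The sketch below appends the standard `utm_source`, `utm_medium`, and `utm_campaign` parameters to a URL; the helper name, the example URL, and the chosen values are hypothetical, and which values you use is a naming convention for your own team to decide.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str = "referral", campaign: str = "") -> str:
    """Return `url` with UTM parameters set, replacing any existing UTM values."""
    utm = {"utm_source": source, "utm_medium": medium}
    if campaign:
        utm["utm_campaign"] = campaign

    parts = urlsplit(url)
    # Keep existing non-UTM query parameters in their original order.
    pairs = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in utm
    ]
    pairs.extend(utm.items())
    return urlunsplit(parts._replace(query=urlencode(pairs)))


print(add_utm("https://example.com/pricing?plan=pro", "chatgpt.com", campaign="aeo"))
# https://example.com/pricing?plan=pro&utm_source=chatgpt.com&utm_medium=referral&utm_campaign=aeo
```

Tagged links still report a source when the referral header is stripped, which is the gap this supplements.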

What does "funnel compression" mean in AI search, practically? It means the research, comparison, and consideration stages of the buying journey are now happening inside AI conversations, before the visitor ever reaches your website. By the time someone clicks through from an AI recommendation, they've already been through a version of your awareness and consideration funnel. What the website receives is the final stage: validation and decision. In practice: fewer sessions, shorter visits, higher conversion rates, and a requirement for immediate contextual engagement rather than long-form nurturing.

Does AI-referred traffic convert better than paid search? In many cases, yes. In particular for high-consideration purchases. Paid search achieves high intent through targeting criteria set at the campaign level. AI-referred visitors arrive having already moved through an equivalent qualification process inside the AI conversation, carrying a specific recommendation and residual trust from that exchange. The most meaningful comparison isn't channel-level aggregate rates. It's the intent level of an AI-referred visitor at the moment they land versus a paid visitor at the equivalent decision stage. And on that basis, AI referral tends to win.